The REAL problem with encryption:

you're doing it wrong!

Encryption has made itself famous lately by helping bad guys hide secrets from good guys. If the most powerful supercomputers in the world can't break the mathematical laws of encryption, how can the FBI, NSA and CIA decipher the terrorist communications they intercept?

But there's a flip-side to this question that rarely gets discussed:

If encryption is so unbreakable, why do businesses and governments keep getting hacked?

If terrorists can download an app from the app store that uses encryption to protect their chat messages from the NSA, why couldn't the US Office of Personnel Management, The Home Depot, Target, JPMorgan and Citi Bank (just to name a few) use the same encryption to protect their customer data from hackers? Why do these data breaches keep happening when unbreakable encryption is readily available?

The answer is simple: almost everyone is doing encryption wrong.

There has been an explosion of new healthcare, financial and government applications over the past few years resulting in more and more cryptography being added to backend applications. In more cases than not, this crypto code is implemented incorrectly [1], leaving organizations with a false sense of security that only becomes evident once they get hacked and end up in the headlines.

Mistake #1: Assuming your developers are security experts

"But my company is different," you might be thinking. "Our engineers are brilliant." Unfortunately, even the brightest software developers are usually not security experts. Security experts are mostly found in IT. They're system administrators, pen testers and CISOs; they're not writing code (unless you count scripts written to break into a system).

Software developers are really good at figuring things out; just look at StackOverflow – a massive community of developers helping each other solve challenging problems. So they're not likely to admit to having limited expertise when tasked with something they haven't done before. "I can figure it out," is a common mantra of a good software developer. I should know … I've been coding since I was eight and I say this all the time.

Unfortunately, when it comes to implementing encryption correctly, you don't get a second chance. While a typical developer mistake might cause an error on a web page, a mistake in your data security pipeline can leave all of your sensitive data at risk. Worst of all, you won't find out about the mistake for months or even years, until your organization gets hacked. And by then, it's too late.

Mistake #2: Believing that regulatory compliance means you’re secure

"Our application is PCI compliant. So our data is secure," is another misconception that leads to data breaches. Sure, HIPAA, PCI, CJIS and other regulatory compliance rules require that your sensitive data be protected. But they don't go into much detail about how you should do that. Some don’t even specifically mention encryption at all.

There are a lot of ways to get data security wrong and these regulatory guidelines don't hold your hand to make sure you get it right. Even worse, many development teams adding encryption to their code call it a day once they achieve the minimum security needed for a regulatory checkmark. This "checkmark" mentality toward data security is dangerous.

Mistake #3: Relying on cloud providers to secure your data

With the growth of cloud computing more and more server-side applications have moved from server rooms to data centers spread across the globe run by the likes of Amazon, Microsoft and Google. These tech giants are investing hundreds of millions of dollars in cybersecurity to position themselves as “THE” secure cloud. All of this leads a lot of organizations to assume that any data stored by these providers is ironclad. This is a risky assumption.

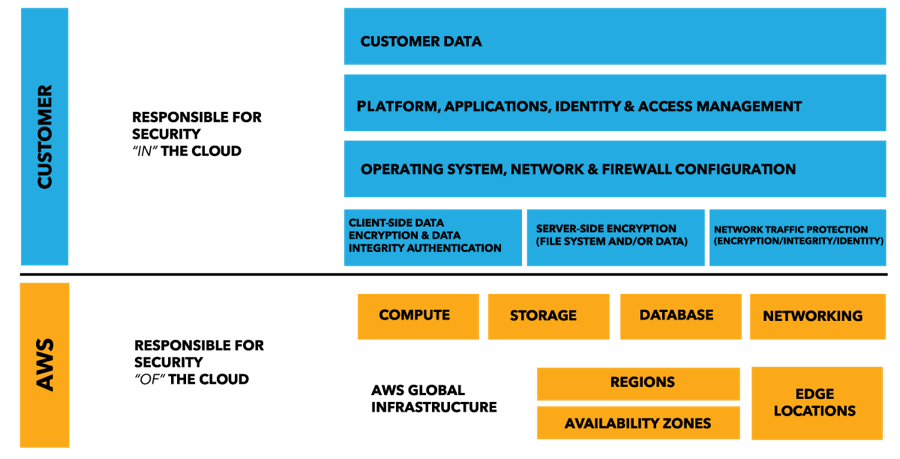

The physical infrastructure powering most cloud providers is secure and some even offer encryption options. However, they always recommend that developers encrypt their sensitive data before storing it in the cloud. Amazon Web Services (AWS) even includes the diagram below to stress that data encryption is the customer’s responsibility, not theirs:

As you can see, a massive amount of data security responsibilities are shouldered upon you. And this is true of any cloud provider.

Mistake #4: Relying on low-level encryption

Protecting your sensitive data with low-level encryption solutions such as disk or file encryption can seem like a tempting one-click-fix. However, many organizations rely solely on these solutions which is downright dangerous.

For starters, disk encryption only kicks in when the server is turned off. While the server is on, the operating system goes about decrypting sensitive data for anyone who is logged in…including the bad guys.

Moving one level up to file encryption, you run into a popular feature called SQL Transparent Data Encryption (TDE) used to encrypt Microsoft and Oracle database files with a single click of a switch. Just like disk encryption, however, this security feature is completely bypassed by a hacker who manages to log in to your database. Only the file that stores your database on the physical drive is encrypted so, unless Tom Cruise is rappelling into the data center to steal your physical drive, this isn't going to give you much protection.

Mistake #5: Using the wrong cipher modes and algorithms

Take a look at this Wikipedia list of cryptographic algorithms. Now take a look at the different block cipher modes to choose from. Here's a StackOverflow post on which mode to use with AES. Are we having fun yet??? The point is, there are a lot of variations for a developer to choose from when being asked to "encrypt our sensitive data please."

A common question I get when telling a developer about Crypteron's encryption and key management platform is, "doesn't every application framework come with an encryption library?" Sometimes I just ask them to take a look at the documentation for mcrypt, the encryption library for PHP. Spoiler: it's not pretty. There is a lot of misleading information on the Internet and A LOT of ways to get it wrong:

- Using random numbers that are not cryptographically secure (or, in the case of the Sony PS3 hack, a constant)

- Using AES-ECB mode for data larger than 128 bits

- Reusing an Initialization Vector (IV) multiple times which can nullify the entire encryption process itself

- Using deterministic encryption to make sensitive data searchable without accounting for dictionary attacks

These examples are just a small snapshot of the vast number of encryption pitfalls. It’s OK if you don’t understand them – most developers don’t either.
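To make the IV reuse pitfall from the list above concrete, here is a minimal sketch using only the Python standard library. The `toy_keystream` and `toy_encrypt` helpers are hypothetical stand-ins for a real stream or counter mode cipher (the standard library ships no AES) and must not be used to protect real data. The point is only to show what leaks when one key and one nonce are used for two messages.

```python
import hashlib
import secrets


def toy_keystream(key: bytes, nonce: bytes, length: int) -> bytes:
    """Toy keystream: SHA-256 over key + nonce + counter. Illustration only."""
    out = bytearray()
    counter = 0
    while len(out) < length:
        out += hashlib.sha256(key + nonce + counter.to_bytes(8, "big")).digest()
        counter += 1
    return bytes(out[:length])


def xor_bytes(a: bytes, b: bytes) -> bytes:
    """XOR two byte strings of equal length."""
    return bytes(x ^ y for x, y in zip(a, b))


def toy_encrypt(key: bytes, nonce: bytes, plaintext: bytes) -> bytes:
    """Encrypt by XORing the plaintext with the toy keystream."""
    return xor_bytes(plaintext, toy_keystream(key, nonce, len(plaintext)))


# secrets draws from the OS CSPRNG; the random module is not suitable for keys.
key = secrets.token_bytes(32)
msg_a = b"PAY ALICE $0000100"
msg_b = b"PAY BOB   $9999999"

# Mistake: the same nonce (IV) is used for two messages under one key.
reused_nonce = secrets.token_bytes(16)
ct_a = toy_encrypt(key, reused_nonce, msg_a)
ct_b = toy_encrypt(key, reused_nonce, msg_b)

# The keystream cancels out: an eavesdropper learns msg_a XOR msg_b
# without ever seeing the key.
leak = xor_bytes(ct_a, ct_b)

# Knowing (or guessing) one message then reveals the other.
recovered_b = xor_bytes(leak, msg_a)

# Fix: draw a fresh nonce for every message.
ct_b_fresh = toy_encrypt(key, secrets.token_bytes(16), msg_b)
```

With a fresh nonce per message, XORing the two ciphertexts no longer cancels the keystream, so the same trick yields only noise.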

Mistake #6: Getting key management wrong

I've saved the biggest mistake for last. Failure to handle key management properly is, hands down, the most common way that sensitive data ends up in the hands of hackers even if it was encrypted correctly. This is the equivalent of buying the best lock in the world and then leaving the key under the doormat. If hackers get your encrypted data and your encryption key, it's game over. Let's go over some key management failures.

Storing the key under the mat

Let's assume that all of your sensitive data is now encrypted and signed properly. Where do you put your encryption key? Some common choices:

- In the database - BAD

- On the file system - BAD

- In an application config file - BAD

Don't forget, we have to assume that the hackers have already broken into your database and application server so you can't store your key there. But most developers do.

Leaving the key unprotected

Even once you find a separate place to store the key, you're still not done because hackers might break in there too. So you need to encrypt your encryption key itself with another encryption key, typically called a Key Encryption Key (KEK), which you then need to store in an entirely different location. For even more security, you can go one level higher and secure your KEKs with a Master Encryption Key and a Master Signing Key. Developers rarely add this many layers of encryption. But they should.

Fetching the key insecurely

Even with three layers of encryption protecting your data, there is still the challenge of transferring the key to your app securely. Ideally this involves authentication between your app and the key management server as well as delivery over an encrypted connection...a fourth layer of encryption. There are also performance considerations such as securely caching the key in memory which can be tricky. These complexities are easy to get wrong.

Using the same key for all your data

Do you use the same key for your house, your car and your office? Of course not. So why would you use one encryption key for all of your sensitive data? You should break up your data into multiple security partitions each with its own encryption key. This is a challenge since it requires you to intelligently determine which key to fetch every time you encrypt and decrypt data. So most developers skip this step.
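One possible way to get a distinct key per security partition, sketched here as an assumption rather than as the approach the article has in mind, is to derive each partition key from a single master key with HKDF (RFC 5869). The function names and partition labels below are hypothetical, and a real deployment would still need to store the master key away from the data.

```python
import hashlib
import hmac
import secrets


def hkdf_sha256(master_key: bytes, info: bytes, length: int = 32,
                salt: bytes = b"\x00" * 32) -> bytes:
    """HKDF with SHA-256, following the extract-then-expand steps of RFC 5869."""
    # Extract: condense the input key material into a pseudorandom key.
    prk = hmac.new(salt, master_key, hashlib.sha256).digest()
    # Expand: chain HMAC blocks, binding each one to the `info` label.
    okm = b""
    block = b""
    counter = 1
    while len(okm) < length:
        block = hmac.new(prk, block + info + bytes([counter]),
                         hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]


def derive_partition_key(master_key: bytes, partition: str) -> bytes:
    """Derive a 32-byte key that is specific to one security partition."""
    return hkdf_sha256(master_key, info=b"partition:" + partition.encode("utf-8"))


master_key = secrets.token_bytes(32)
billing_key = derive_partition_key(master_key, "billing")
medical_key = derive_partition_key(master_key, "medical-records")
```

The derivation is deterministic, so the application only has to know which partition a record belongs to in order to pick the right key. The trade-off is that a leaked master key exposes every partition, whereas independently generated keys would not share that single point of failure.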

Never changing the key

Everyone knows that it's a good idea to change the locks every once in a while and the same is true for encryption. This is called key rotation and it's not trivial. It requires maintaining multiple versions of each encryption key and matching each one to the corresponding version of encrypted data. In certain cases, you should migrate your existing data from the old key to the new key...which is even more complicated. So again, most developers skip this step entirely and never change their encryption keys.
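The version bookkeeping described above can be sketched roughly as follows. `KeyRing`, `Record`, `protect`, `verify` and `migrate` are hypothetical names, and the keyed operation is an HMAC tag rather than encryption because the Python standard library has no AES. The part that carries over to real encryption is storing the key version next to each record and keeping old keys available until the data has been migrated.

```python
import hashlib
import hmac
import secrets
from dataclasses import dataclass, field


@dataclass
class KeyRing:
    """Holds every key version; new records always use the current one."""
    keys: dict = field(default_factory=dict)
    current_version: int = 0

    def rotate(self) -> int:
        """Create a new key version and make it the current one."""
        self.current_version += 1
        self.keys[self.current_version] = secrets.token_bytes(32)
        return self.current_version

    def get(self, version: int) -> bytes:
        return self.keys[version]


@dataclass
class Record:
    key_version: int  # stored with the data so the right key can be found later
    payload: bytes
    tag: bytes


def protect(ring: KeyRing, payload: bytes) -> Record:
    """Tag the payload with the current key version."""
    version = ring.current_version
    tag = hmac.new(ring.get(version), payload, hashlib.sha256).digest()
    return Record(version, payload, tag)


def verify(ring: KeyRing, record: Record) -> bool:
    """Check a record against the key version it was written with."""
    expected = hmac.new(ring.get(record.key_version), record.payload,
                        hashlib.sha256).digest()
    return hmac.compare_digest(expected, record.tag)


def migrate(ring: KeyRing, record: Record) -> Record:
    """Re-protect an old record under the current key version."""
    if not verify(ring, record):
        raise ValueError("record failed verification; refusing to migrate")
    return protect(ring, record.payload)


ring = KeyRing()
ring.rotate()                          # version 1
old_record = protect(ring, b"account=12345")
ring.rotate()                          # version 2
still_valid = verify(ring, old_record)  # old key is kept, so this still passes
migrated = migrate(ring, old_record)    # now tagged under version 2
```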

Strong data security IS possible

This article isn't meant to be all doom-and-gloom. In fact, it's just the opposite. People are starting to become desensitized to all of the data breaches that keep happening. There is a new sense that getting hacked is inevitable and no data is ever safe. But that's not the case. It IS possible to perform encryption correctly and drastically decrease your chances of getting hacked. If we learn from our mistakes, educate ourselves on data security, and avoid reinventing the wheel, then encryption can be our strongest ally in the fight against hackers.

Scott Arciszewski says:

I find the advice given here to be incomplete. There are even more sinister mistakes you can make.

Encryption provides confidentiality, but not integrity. If your application just encrypts/decrypts data with AES-CBC but doesn’t include an authenticity check, I can replay a carefully-garbled ciphertext and decrypt your message one byte at a time.

Adding integrity to your encryption routine produces something called authenticated encryption; but watch out! There’s this thing called the Cryptographic Doom Principle (CDP) if you don’t do everything properly (encrypt then authenticate).

Then you have side-channels, such as timing attacks. Even if you implement Encrypt-then-MAC to avoid the CDP, if you aren’t comparing the message authentication codes in constant-time, an attacker can slowly build a valid MAC for their chosen ciphertext without knowing the HMAC key. This allows them to (albeit much more slowly) continue to exploit the padding oracle vulnerability alluded to above (the byte-at-a-time decryption).
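A minimal sketch of the check described above, assuming an Encrypt-then-MAC layout in which the tag covers the IV plus the ciphertext. The function names are hypothetical and the ciphertext is just opaque bytes here. `hmac.compare_digest` is the standard library's constant-time comparison, while `==` on bytes may return as soon as the first differing byte is found.

```python
import hashlib
import hmac
import secrets


def tag_ciphertext(mac_key: bytes, iv: bytes, ciphertext: bytes) -> bytes:
    """Compute the MAC over the IV and ciphertext (Encrypt-then-MAC)."""
    return hmac.new(mac_key, iv + ciphertext, hashlib.sha256).digest()


def verify_before_decrypt(mac_key: bytes, iv: bytes, ciphertext: bytes,
                          tag: bytes) -> bool:
    """Return True only if the tag matches, compared in constant time."""
    expected = tag_ciphertext(mac_key, iv, ciphertext)
    # Do NOT use `expected == tag`: its timing can depend on how many
    # leading bytes match, which is what a timing attack measures.
    return hmac.compare_digest(expected, tag)


mac_key = secrets.token_bytes(32)      # separate from the encryption key
iv = secrets.token_bytes(16)
ciphertext = secrets.token_bytes(48)   # stand-in for real AES-CBC output
tag = tag_ciphertext(mac_key, iv, ciphertext)

ok = verify_before_decrypt(mac_key, iv, ciphertext, tag)

# Flip one bit of the ciphertext: verification must fail before any
# decryption or padding check is attempted.
garbled = bytes([ciphertext[0] ^ 0x01]) + ciphertext[1:]
rejected = not verify_before_decrypt(mac_key, iv, garbled, tag)
```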

Then you have to consider birthday attacks against random IVs, weak random number generators (such as OpenSSL’s non-thread-safe userland PRNG), cache-timing attacks against software AES, and a million other ways for a seemingly secure cryptosystem to provide zero security against a dedicated attacker.

Also, don’t use mcrypt. Ever.

Yaron Guez says:

Hi Scott!

Thanks for commenting. I’m a fan of your Paragon Initiative blog. The confidentiality vs integrity issue is definitely an important distinction. Unfortunately, given the length of the article I decided to bundle that distinction into Mistake #5 since it involves using a mode that doesn’t offer authentication / tamper protection.

Joel says:

Hi,

Do you know of any good, comprehensive books on encryption that would contain the information you’ve provided? I essentially want to learn how to implement encryption properly to secure data.

Thank you!

-Joel

Sid Shetye says:

Sure Joel. First – the mandatory warning 😉 … we caution that until you’ve mastered the materials and have experimented by implementing a few security platforms, do not implement one for production use, securing data of any value. This is because there are many subtle things that weaken security. Simplified example: using an equality comparator (==) instead of a constant-time equality comparator (custom implementation) can weaken good cryptography. There are many other cases and you become aware of them only with sufficient mastery and experience. Even then implementations can have issues, requiring ongoing updates (e.g. OpenSSL and Heartbleed).

With that disclaimer, here are some books, in order of recommendation:

1) Applied Cryptography by Bruce Schneier

2) Cryptography Engineering by Ferguson & Schneier

3) Cryptography and Network Security by William Stallings

If you’ve been put off by dense Math equations before, note that mathematical intuition can often take you far in understanding existing ciphers. Of course, when creating ciphers and proving their security, one does need deep math expertise and solid current experience. Happy reading.

Windu Sayles says:

In case anyone is reading this article in 2021 and beyond, the link to “Cryptographic Doom Principle” is out of date. Here’s a working one, as of July 12, 2021:

https://moxie.org/2011/12/13/the-cryptographic-doom-principle.html

Jeff Davies says:

The problem with the Verisign-type approach, where asymmetric encryption gives authentication to Organisational Root Certifiers and so on, is that if a key is compromised, re-establishing trust is very expensive since most systems are not built with this possibility in mind.

The likelihood of an Organisational root certifier private key being broken is lower now that keys are so much longer, meaning brute forcing with custom hardware like ASICs is probably harder.

One Time Pad, patented in 1917, is the only secure way to exchange very important secrets. Better with a perfect random number generator. I’m actually quite heartened to see products like Yubico: https://www.yubico.com/ (I have no relationship or experience with them). Because it’s based on OTP, even if a Yubico USB key was intercepted and the codes extracted, unless the next Yubico key is also intercepted, then trust is re-established. Note there is a client USB key and a server USB key, and the keystore code is run on a separate microcontroller on the USB key by the looks of it.

h4nna says:

I absolutely agree with everything you said.

Very smart advice.

Thank you

anonymous says:

good article

Megan, D says:

Great read, but one suggestion. You failed to mention FPE or format preserving encryption. Using FPE, you can encrypt sensitive information, even while in use. So even a user that presented valid credentials would only see protected data.

This is the future of encryption. It allows for the adoption of a “least privilege” model. While not all data can be protected this way, the sensitive information (the stuff you read about being stolen) can be. Data at Rest is still important but you said it yourself, the chances of disk or complete array walking out of your data center are limited.

Arno Laenen says:

Hello,

I have a question about this post. I am making an essay and I want to use this. But I need to give your name and the publishing date. Can you please give me the year in which you wrote this article?

Yaron Guez says:

Hi Arno, my name is Yaron Guez and this was published in 2016.

Sunil Gupta says:

Hi Yaron

A great article with very apt information. Curious to know whether you have explored the merit of using “Quantum Key Distribution” to distribute public keys as photons in an absolutely unhackable way.

Thanks

Sunil

Yaron Guez says:

Hi Sunil,

QKD helps distribute a *shared* key between *two* parties such that anyone else listening will be detected immediately. The drawbacks are that they are point-to-point links versus hub-and-spoke. So for N entities, you need N(N-1)/2 QKD links, a number that grows quadratically and quickly becomes unmanageable. Also link lengths are typically limited to just a few hundred kilometers, limiting their geographic reach. Lastly, to distribute a *public* key (as you asked) you can do that over a public channel. No need for quantum anything. I hope that’s helpful!
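To put numbers on the N(N-1)/2 figure, here is a small sketch. The function name is hypothetical, and the hub-and-spoke comparison assumes one link per entity to a central hub.

```python
def qkd_point_to_point_links(n: int) -> int:
    """Number of links needed so that every pair of n entities has its own link."""
    return n * (n - 1) // 2


for n in (10, 100, 1000):
    pairwise = qkd_point_to_point_links(n)
    hub_and_spoke = n  # assumption: one link from each entity to a hub
    print(f"{n} entities: {pairwise} pairwise links vs {hub_and_spoke} hub links")
```

For 10, 100 and 1000 entities this gives 45, 4950 and 499500 pairwise links respectively, so the link count grows with the square of N.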

Ludovic Rembert says:

Hi Yaron,

Thanks for the post. I’m using a form of AES, but wondering which encryption mode to choose. I’m leaning toward OFB, because I’ve been told by other network engineers that it takes up less space, which corroborates some of the answers in that Stack Overflow post you linked to. However, OCB also looks appealing though it appears to be protected by patents (?). Which do you prefer?

Kindly,

L

secureDeveloper says:

Great summary! Thank you.

//quote

Where do you put your encryption key? Some common choices:

In the database – BAD

On the file system – BAD

In an application config file – BAD

//endquote

Could you add “As an environment variable” to this list? So I can have a clear reference to counter the mid-level developers who think they’re smarter than a good hacker? And maybe “java system properties”?

I continue to see these used for the encryption-key. And, similar to you, label this as “hiding the key to your house under the doormat, or probably better, hanging the key to your house on the front door”.

thanks again

Russel says:

As always, the human factor and social engineering lead to all sorts of leaks.

Daman says:

i have a question, i am on a website that sends encrypted data,

my browser is supposed to decrypt that data every time i login,

How is the key sent to my browser? I can intercept traffic between the server and my browser and view all transactions and all requests; you can do that with most browsers now (F12 in Firefox will show you all traffic).

The question is, the server HAS to send the key to my browser, right ? so where is the protection here ?

Twisted_Code says:

Disclaimer (keeping in mind mistake #1 of this article’s list): I’m a software engineer, not a security expert.

That assumption clarified, and also assuming I understand your question correctly, I’ll answer your question as I understand it. (but first, one last assumption to limit the scope of my answer: I’m also going to assume both your browser and the Web server you’re communicating with are using today’s “best practices” and not some alien technology or something cooked up by the NSA. If you happen to be an alien or NSA agent, you probably already know the answer, right?):

1. The connection is probably encrypted using TLS. If you want to be sure, check whether the protocol is HTTPS and/or shows a lock icon next to the web address. If you want to be even more sure about the details (as I’ve done on a few occasions when uncertainty arose), many browsers such as Firefox even let you view the full TLS certificate the browser received from the server (which in most cases will be automatically validated by the browser before displaying the page, by the way).

2. TLS (Transport Layer Security, a technology based on SSL and sometimes still called by that name) uses asymmetric cryptography (sometimes called public-key cryptography), in conjunction with identity certificates distributed by certificate authorities (CAs), to exchange a short symmetric key.

3. Asymmetric cryptography, in a nutshell (and you should really look into it if you have any further questions about this), relies on what’s known as a “trapdoor function” to make it so that one person with a public key can encrypt but not decrypt data they want to send, while the recipient can decrypt it using a secret key mathematically related to the public key. I’m really simplifying this, and honestly don’t FULLY understand how things like RSA and Elliptic Curve work (2 popular variations on asymmetric cryptography)… but hey, I already told you I’m not an expert.
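For a feel of how the public/secret key relationship works, here is a toy "textbook RSA" sketch with deliberately tiny primes. It is an illustration under heavy assumptions: real RSA uses primes hundreds of digits long plus a padding scheme, and TLS itself involves considerably more than this. The variable and function names are made up for the example.

```python
# Toy textbook RSA with tiny primes. Illustration only, trivially breakable.
p, q = 61, 53
n = p * q                      # public modulus (3233)
phi = (p - 1) * (q - 1)        # only computable if you know p and q
e = 17                         # public exponent
d = pow(e, -1, phi)            # secret exponent: modular inverse of e (Python 3.8+)

public_key = (e, n)            # safe to hand to anyone
private_key = (d, n)           # must stay with the recipient


def encrypt(message: int, key: tuple) -> int:
    """Anyone holding the public key can do this."""
    exponent, modulus = key
    return pow(message, exponent, modulus)


def decrypt(ciphertext: int, key: tuple) -> int:
    """Only the holder of the private key can undo it."""
    exponent, modulus = key
    return pow(ciphertext, exponent, modulus)


message = 65                   # must be smaller than n
ciphertext = encrypt(message, public_key)
recovered = decrypt(ciphertext, private_key)
```

The "trapdoor" is that computing `d` requires `phi`, which in turn requires factoring `n` into `p` and `q`. That is trivial for 3233 but infeasible for the key sizes used in practice.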

I hope that helps you feel a little bit safer about your connection. I also recommend doing some googling over these topics to get a better understanding, because I certainly can’t cover everything I’ve learned in a single comment, sadly.

Wishing you the best,

~Twisted Code

ISO 27001 Implementation says:

Hello, thanks for sharing this blog, this is very helpful for me.
